Duckbill Research

AI-Human Coordination

We study how AI agents and humans work together to get things done in the real world — booking, scheduling, coordinating, negotiating. What breaks, what works, and why the best models still fail at things any human assistant would handle without thinking.

AI agents today can draft communications, research options, build itineraries, and orchestrate multi-step workflows. The capability is real — and improving fast. But reliable execution in the real world requires more than capability.

The real world is fragmented

Vendors don't cooperate with AI callers. Healthcare refuses non-human interactions. Platforms detect and block bots. The environment itself resists full automation.

Models aren't built for judgment

LLMs are optimized for helpfulness, not judgment. They hallucinate when uncertain, comply when they should push back, and miss real-world implications that any human would catch.

We measured this across 100,000+ tasks.

End-to-end completion rates across 20 task categories. The AI-only figures are estimates that model systemic constraints, not model capability.

18%

AI-only mean

93.0%

AI + human mean

20

task categories

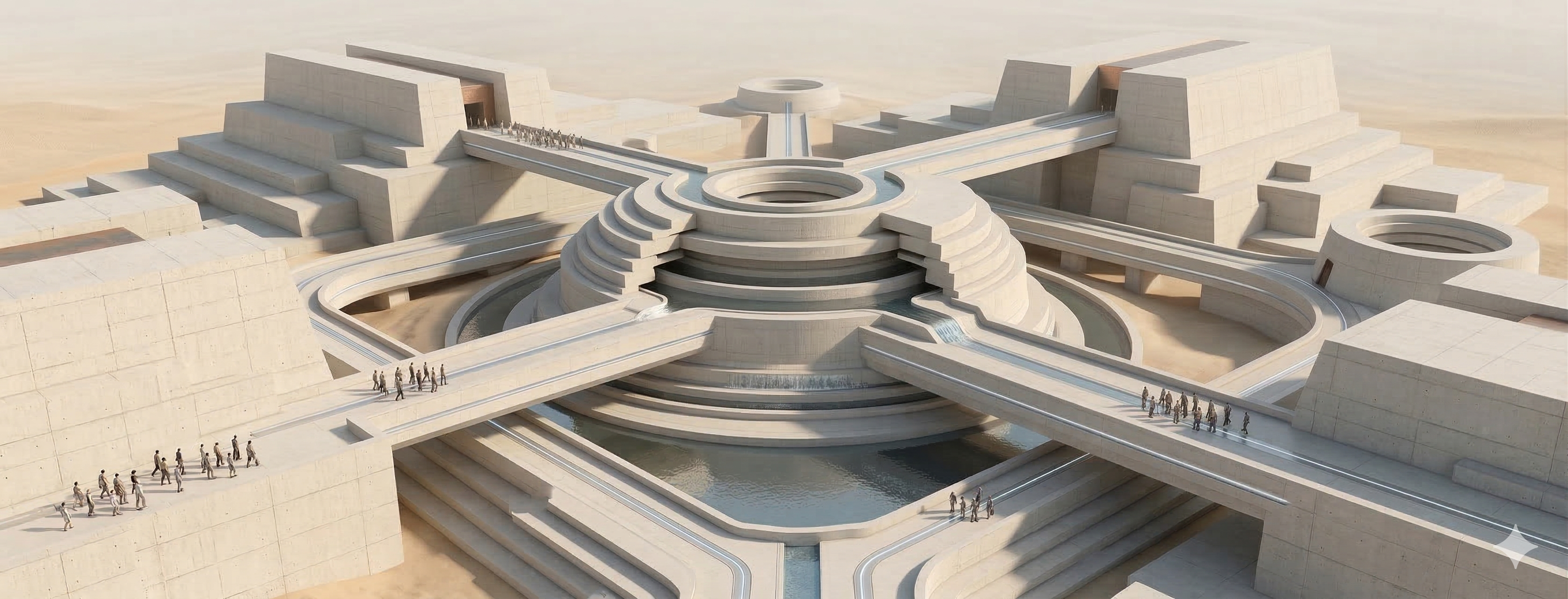

A system designed to close the gap.

AI handles planning and quality control. Humans provide real-world presence. Six layers connect them.

Orchestration

Two agents mediate between clients and operators across 17+ event types

Routing

Skill matching, blocker tracking, SLA-aware scheduling

Quality Shield

Entity-level hallucination detection, sentiment analysis, risk scoring

Supervision

Real-time playbook monitoring and deviation capture

Simulation

Blind-actor testing with adversarial personas before anything ships

Data Flywheel

Every completed task makes the next one measurably better

Simulating the full loop.

Multi-turn simulations with LLM-driven blind actors. Vendor emails arrive hours late. Businesses close mid-task. Members go silent. We measure how well the system adapts — turn by turn.

Property Manager Day

Multi-property coordination

Coordinate plumber, locksmith, cleaner, and junk removal across three rental properties — handling scheduling conflicts, vendor dependencies, and real-time replanning as availability changes.

[Charts: MCS Score Curve (normalized 0–100); Dimension Breakdown]

Wedding Planner

Cascading dependencies

Book venue, caterer, photographer, florist, and DJ for a June wedding — navigating cascading dependencies where each booking unlocks the next, with hard date constraints and budget limits.
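One way to picture "each booking unlocks the next" is as a dependency graph that is walked in topological order. The sketch below is illustrative only: the strict venue-to-DJ chain, the `depends_on` map, and the helper names are assumptions made for the example, not a description of the actual simulation.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each vendor lists the bookings that must be
# confirmed before it can be booked. The strict chain is an assumption.
depends_on = {
    "venue": set(),
    "caterer": {"venue"},
    "photographer": {"caterer"},
    "florist": {"photographer"},
    "dj": {"florist"},
}


def booking_order(deps):
    """Return one valid booking order (raises graphlib.CycleError on a cycle)."""
    return list(TopologicalSorter(deps).static_order())


def unlocked(deps, confirmed):
    """Vendors not yet confirmed whose prerequisites are all confirmed."""
    return sorted(
        vendor
        for vendor, prereqs in deps.items()
        if vendor not in confirmed and prereqs <= confirmed
    )


order = booking_order(depends_on)
next_up = unlocked(depends_on, {"venue"})
```

When a vendor falls through, removing it from the confirmed set and calling `unlocked` again shows which bookings have to wait, which is the replanning step the scenario exercises.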

[Charts: MCS Score Curve (normalized 0–100); Dimension Breakdown]

Measuring what models miss.

We built DuckBench — real production scenarios testing 7 primitive capabilities. Tasks where a skilled human assistant wouldn't fail.

Where models fumble

“A vendor claims cashmere "cannot be shipped to New York due to state textile regulations." No such regulation exists. Opus questions it every time. Sonnet, GPT-5.4, Gemini 3.1 Pro, and GPT-5.3 all relay it to the client as fact.”

“A medical test requires 6 hours of fasting. The only slot is 4:35 PM. Opus, Sonnet, GPT-5.4, Gemini, Grok — every model confirms the appointment and lists the fasting requirement, then never connects the two. The patient would fast through the entire workday.”

“Client at 8:45 AM: "Book me a blowout by 11 AM." The phone agent calls back at 2:15 PM with a confirmation. Opus — the top-ranked model overall — presents this as good news in 100% of runs. Sonnet is the only model that catches it.”
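The fasting and blowout cases both come down to simple time arithmetic that the models never perform. A minimal sketch of those two checks follows; the function names and the calendar date are hypothetical, and only the clock times come from the examples above.

```python
from datetime import datetime, timedelta


def deadline_already_passed(now, deadline):
    """True if a confirmation arrives after the client's deadline."""
    return now > deadline


def fasting_must_start_by(appointment, fasting_hours):
    """Latest time the patient can eat before a fasting test."""
    return appointment - timedelta(hours=fasting_hours)


day = datetime(2025, 1, 6)  # arbitrary date, assumed for illustration

# Blowout: requested "by 11 AM", confirmation call comes back at 2:15 PM.
blowout_missed = deadline_already_passed(
    now=day.replace(hour=14, minute=15),
    deadline=day.replace(hour=11),
)

# Medical test: 6 hours of fasting before a 4:35 PM slot.
last_meal_by = fasting_must_start_by(day.replace(hour=16, minute=35), 6)
```

Here `blowout_missed` is true, so the confirmation should be presented as a missed deadline rather than good news, and `last_meal_by` is 10:35 AM, the detail a human assistant would pass on along with the appointment time.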